Here are the facts of the situation: It was early last summer. My wife was at the airport. The weather was hot, her shuttle bus late. She was texting with a family member who had come down with a cough. The family member had taken a Covid test and reported that it was negative. My wife, returning to the topic of her stressful day, typed the words “I need a,” intending to write “I need a drink.” But the autocomplete feature on her phone, interceding at the speed of thought—of artificial, digital thought—offered another suggestion: “I need a booster.”

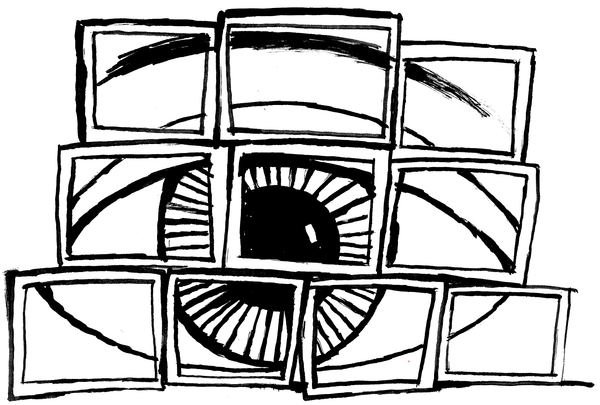

The nightmare is all right there, if you unpack it: The unsought programming of human behavior by pervasive technologies controlled by deceptive, obscure actors. They are deceptive, in this case, because autocomplete is sold as a kind of extension of your will, frontrunning your most probable verbal choices to save you time and ease your writing. The feature isn’t sold, so far as I’m aware, as a life coach, a public-health aide, or a physician. But it had behaved like one that day, and my wife, who notices everything, had caught it; she even took a screenshot. When she told me of the incident, it confirmed an emerging feeling that my own texts were often interrupted by digital guides less interested in finishing my thoughts than in foisting on me their own. Going with the eerie flow of life in a mediated, moderated age, I had chosen to ignore them. I had let them pass.

It’s a choice we face constantly these days, particularly in our dealings with technology and the information flooding through it: Should we indulge our suspicions about the machine and the murky power complex it represents, or should we dismiss them, in some cases or all?

The argument for repeatedly dismissing them—because they are aroused repeatedly—seems to me to grow weaker by the day, if it is even an argument at all. To return to my wife’s “I need a booster” experience: It may seem minor in isolation but is enormous if treated as one instance of something that likely happens countless times a day and for an unfathomable range of motives. Not many people I know would advocate for phones that plant hypnotic prompts in users’ heads during their innocent conversations with relatives, under the cover of helping the user type. The partisans of covert mind control are still quite few, I like to think, even when the cause in question is popular. The party of thoughtless quiescence in such matters is vast, however, and it rarely defends its inaction. It’s rarely asked to do so.